It takes as few as 100 manipulated data points to corrupt an AI system, with attackers succeeding more than 60% of the time. This is not a fringe risk. It is one of the most efficient and invisible attack vectors in enterprise AI today.

AI is delivering efficiency, speed, and insight. But it is also introducing decisions that organizations don’t fully see, question, or govern. Algorithmic security is emerging as one of the defining challenges of enterprise AI in 2026.

Data poisoning shows why. According to IBM, data poisoning occurs when attackers manipulate or corrupt the training data used to build AI models, fundamentally altering how those systems behave in production.

Unlike traditional cyber threats that exploit systems or networks, data poisoning operates at the learning layer. AI models depend entirely on the integrity of their training data. When that data is compromised, the model doesn’t just fail. It learns the wrong behavior.
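To make this concrete, here is a minimal Python sketch of label flipping, one simple form of data poisoning. The risk-score data, the nearest-centroid classifier, and the function names `train_centroids` and `predict` are all hypothetical, chosen for illustration rather than taken from IBM or any real system. No code changes between the two runs; only two training labels do.

```python
from statistics import mean

def train_centroids(samples):
    """Learn one centroid (mean score) per label from (score, label) pairs."""
    by_label = {}
    for score, label in samples:
        by_label.setdefault(label, []).append(score)
    return {label: mean(scores) for label, scores in by_label.items()}

def predict(centroids, score):
    """Return the label whose centroid is closest to the score."""
    return min(centroids, key=lambda label: abs(score - centroids[label]))

# Hypothetical clean data: low risk scores were approved, high ones denied.
clean = [(1.0, "approve"), (2.0, "approve"), (3.0, "approve"),
         (7.0, "deny"), (8.0, "deny"), (9.0, "deny")]

# Poisoned copy: an attacker relabels two high-risk records as "approve".
poisoned = clean[:3] + [(7.0, "approve"), (8.0, "approve"), (9.0, "deny")]

print(predict(train_centroids(clean), 6.0))     # deny
print(predict(train_centroids(poisoned), 6.0))  # approve
```

Real models and real attacks are far more complex, but the mechanism is the same: the model faithfully learns whatever the data teaches it.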

What Is Algorithmic Security?

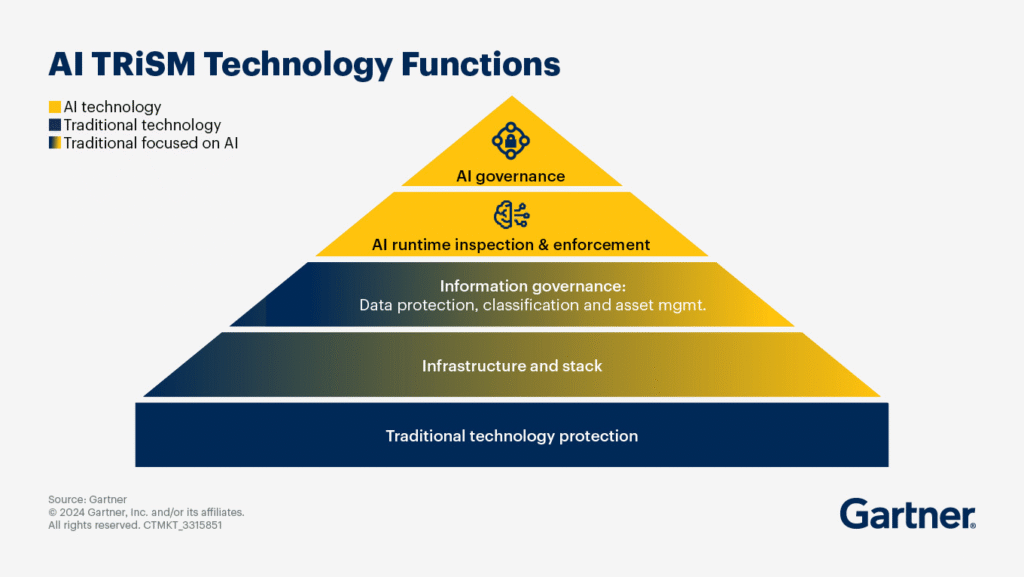

Algorithmic security is the practice of protecting AI systems at the level of the decisions their algorithms make. It goes beyond traditional security concerns, such as protecting infrastructure, networks, or endpoints, to secure the data and models behind those decisions.

Cybersecurity is primarily concerned with preventing outside attacks on information systems. AI adds security concerns that this perimeter-focused view does not cover.

Organizations need to be able to ensure the accuracy, fairness, resilience, and robustness of the algorithms they rely on.

The key question of algorithmic security is: Can you trust your algorithm to make the right decisions all the time?

Moving Beyond Traditional Security

Most enterprise security frameworks were built for deterministic systems, where inputs lead to predictable outputs. AI systems do not behave this way.

They learn from data, evolve over time, and can be influenced in subtle ways that bypass conventional controls.

For example:

- A secure system can still produce biased hiring recommendations.

- A protected application can still generate incorrect financial insights.

- A monitored AI model can still be manipulated through adversarial inputs.

This is why algorithmic security has emerged as a distinct priority in 2026.

As Gartner notes, an increasing number of enterprise AI implementations fail because of problems with trust, explainability, and governance, not because of cybersecurity breaches. Therein lies an essential truth: securing access to AI does not, by itself, secure what the AI produces.

The Four Essential Pillars of Algorithmic Security

To put algorithmic security into practice, businesses have to address four main areas:

1. Data Integrity

The effectiveness of AI algorithms is determined by the integrity of the data used during the learning process.

- Data integrity protection and management.

- Monitoring of the data pipeline.

- Data quality assurance.

IBM highlights that compromised training data can influence the model itself, making data integrity a basic aspect of security.
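One basic pipeline control is to fingerprint training records when they are approved and re-check those fingerprints before every training run. The sketch below assumes records are JSON-serializable dictionaries with an `id` field; `record_digest`, `build_manifest`, and `find_tampered` are hypothetical helper names, not part of any product.

```python
import hashlib
import json

def record_digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys, compact JSON) with SHA-256."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def build_manifest(records: list) -> dict:
    """Map each approved record's ID to its digest."""
    return {r["id"]: record_digest(r) for r in records}

def find_tampered(records: list, manifest: dict) -> list:
    """Return IDs of records that changed, were inserted, or were deleted."""
    seen = set()
    suspicious = []
    for r in records:
        seen.add(r["id"])
        # A missing manifest entry (new record) or a changed digest is suspicious.
        if manifest.get(r["id"]) != record_digest(r):
            suspicious.append(r["id"])
    # IDs in the manifest but absent from this batch were removed.
    suspicious.extend(sorted(set(manifest) - seen))
    return suspicious

# Hypothetical approved training records.
trusted = [
    {"id": "a1", "income": 52000, "label": "approve"},
    {"id": "a2", "income": 18000, "label": "deny"},
    {"id": "a3", "income": 31000, "label": "approve"},
]
manifest = build_manifest(trusted)

# The batch that arrives at training time: one label flipped, one record injected, one dropped.
incoming = [dict(trusted[0]), {**trusted[1], "label": "approve"},
            {"id": "x9", "income": 99000, "label": "approve"}]
print(find_tampered(incoming, manifest))  # ['a2', 'x9', 'a3']
```

This catches records altered, inserted, or deleted after the manifest was built. It cannot detect data that was already poisoned before approval, so it complements data quality checks rather than replacing them, and the manifest itself must be stored where the pipeline cannot overwrite it.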

2. Model Robustness

AI models should withstand manipulation attempts and unexpected inputs.

- Evaluating models against adversarial attacks.

- Stress testing of models.

- Model evaluation under unusual conditions.

Research from the Texas Advanced Computing Center shows that even slight changes to input data can significantly alter a model’s output.
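The fast gradient sign method (FGSM) is a standard way to generate such small, targeted perturbations for adversarial testing. The sketch below applies it to a toy logistic-regression "fraud detector" whose weights are made up for illustration; it is not drawn from the TACC research, and `WEIGHTS`, `BIAS`, and `fgsm` are hypothetical names.

```python
import math

# Hypothetical fixed weights for a 3-feature fraud detector (not a trained model).
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = -0.2

def predict_proba(x):
    """Probability of the positive class ("fraud") under logistic regression."""
    z = BIAS + sum(w * xi for w, xi in zip(WEIGHTS, x))
    return 1.0 / (1.0 + math.exp(-z))

def fgsm(x, true_label, epsilon):
    """Move each feature by epsilon in the direction that increases the log loss.

    For logistic regression, d(loss)/d(x_i) = (p - y) * w_i, so only its sign matters.
    """
    p = predict_proba(x)
    grad = [(p - true_label) * w for w in WEIGHTS]
    return [xi + epsilon * math.copysign(1.0, g) if g != 0 else xi
            for xi, g in zip(x, grad)]

x = [0.5, 0.3, 0.2]  # hypothetical transaction the model correctly flags as fraud
x_adv = fgsm(x, true_label=1, epsilon=0.15)
print(round(predict_proba(x), 3), round(predict_proba(x_adv), 3))
```

With these assumed weights, shifting each feature by only 0.15 drops the fraud probability from about 0.61 to about 0.46, flipping the decision. Running the same check across a validation set and tracking how often decisions flip at a given `epsilon` is one way to turn stress testing into a measurable metric.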

3. Fairness and Bias Mitigation

Bias is a risk on both ethical and practical grounds.

- Bias audits on an ongoing basis.

- Comparison with benchmark data sets.

- Drift detection, since shifts in data over time can introduce new biases.

Research from the University of Texas at Austin shows that bias is often present in models that are not properly monitored.

“There’s a complex set of issues that the algorithm has to deal with, and it’s infeasible to deal with those issues well,” says Hüseyin Tanriverdi, associate professor of information, risk, and operations management. “Bias could be an artifact of that complexity rather than other explanations that people have offered.”
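A common starting point for a bias audit is to compare selection rates across groups. The sketch below computes each group's rate relative to the most-selected group and flags ratios under 0.8, the "four-fifths" rule of thumb from US employment selection guidelines. The group labels and counts are hypothetical, and a single ratio is a screening signal, not a complete fairness assessment.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, was_selected). Returns the selection rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def disparate_impact(decisions, threshold=0.8):
    """Ratio of each group's rate to the highest rate; flag groups below the threshold."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    ratios = {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return ratios, flagged

# Hypothetical hiring model output: group A selected 50/100, group B selected 30/100.
decisions = [("A", i < 50) for i in range(100)] + [("B", i < 30) for i in range(100)]
ratios, flagged = disparate_impact(decisions)
print(flagged)  # ['B']
```

Running this on every model release, and on production decisions over time, is one way to make bias audits ongoing rather than one-off.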

4. Explainability and Governance

Organizations should have the ability to understand and explain their artificial intelligence models.

- Using explainability tools.

- Creating audit trails for models.

- Following governance frameworks such as the NIST AI Risk Management Framework.

Without these safeguards, even a highly accurate AI system can become a liability to the company.
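Audit trails are more useful when they are tamper-evident. One common pattern, sketched below under the assumption that each decision can be serialized to JSON, is to chain entries by hash so that editing any past record breaks the chain. The `DecisionLog` class, the model version string, and the attribution values are hypothetical placeholders for whatever explainability tool an organization actually uses.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

class DecisionLog:
    """Append-only log of model decisions; each entry stores the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, model_version, inputs, output, explanation):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "explanation": explanation,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry["hash"]

    @staticmethod
    def _digest(entry):
        """SHA-256 over every field except the stored hash itself."""
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()

    def verify(self):
        """True if no entry was edited, reordered, or removed from the middle of the chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

log = DecisionLog()
log.append("credit-v3", {"income": 52000}, "approve", {"income": 0.7})
log.append("credit-v3", {"income": 18000}, "deny", {"income": -0.9})
print(log.verify())  # True
log.entries[0]["output"] = "deny"  # simulate someone rewriting history
print(log.verify())  # False
```

The chain alone does not detect truncation of the newest entries; periodically recording the latest hash in a separate system closes that gap.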

Why Algorithmic Security Is Important in 2026

Businesses are implementing artificial intelligence in areas such as customer experience, cybersecurity, financial management, and operations. As a result, AI now directly affects core business processes.

Consequences of poor algorithmic security include:

- Unreliable and biased decision-making.

- Vulnerability to manipulation.

- Increased regulatory issues and risks.

- Damage to customer relationships.

Benefits of robust algorithmic security include:

- Improved reliability of AI technology.

- Better decision-making processes.

- Safer scaling of AI.

SaaS applications were estimated to account for 23 percent of all security events by 2025, up from 6 percent in 2022, reflecting how quickly threats have followed the adoption of cloud-based tools.

As a result, data pipelines connected to SaaS solutions become a key injection point for data tampering, which makes safeguarding those pipelines critical to algorithmic security.

AI Risk Factors in 2026

As businesses adopt more AI technology, the nature of business risk is changing significantly. Protecting against risk in this context isn’t only about protecting your system architecture. It’s also about protecting how the system learns and evolves.

In 2026, AI-related business risk will come from multiple angles, including data, models, and decision-making pipelines. These risk factors matter most for businesses operating in high-risk environments.

Large-scale Algorithmic Bias

Bias is perhaps one of the most persistent and underestimated vulnerabilities of AI in corporate environments.

University of Texas researchers have demonstrated that, when implemented without monitoring, AI systems tend to become biased over time, especially in critical applications such as recruitment, credit scoring, and segmentation of customers.

“The gap between routine and sophisticated AI use is not hidden in prompts themselves, but in patterns of engagement. And once those patterns are visible, they become possible to recognize, discuss, and scale,” shared Anu Puvvada, KPMG Studio Leader, who led the research for the firm. “Iteration enables ambition, ambition drives strategic tool choice, and repeated success reinforces engagement.”

This has clear implications for companies:

- Distorted decision-making.

- Potential regulatory risks.

- Loss of customer confidence.

Bias doesn’t have to be deliberate. It can arise from training data that’s either inadequate or imbalanced.

Key Takeaways: Steps for Enterprise Leaders to Take Next

In a rapidly growing market, enterprise leaders need to shift from a purely reactive approach to a proactive one.

1. Treat AI as a Risk Domain

Enterprise leaders should treat AI as a strategic risk domain rather than leaving it to the IT and data science departments.

- Security leaders will need to conduct model-level risk assessments.

- Business leaders will need to determine risk tolerance levels.

- Legal and compliance teams will need to enforce governance.

2. Integrate Security Throughout the AI Lifecycle

Algorithmic security should not be an afterthought; it needs to be built into every stage of the AI lifecycle.

Important considerations:

- Secure data pipelines prior to training models.

- Test models in adversarial situations.

- Monitor output continuously during deployment.

3. Prioritize Visibility into Complex Systems

Many companies run sophisticated AI systems, yet lack even basic visibility into how those systems operate.

Leaders need to pay attention to:

- Tools that explain decision-making processes.

- Dashboards that monitor the AI system’s behavior.

- Notifications for abnormal output, not just activity alerts.
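One way to alert on abnormal output, rather than mere activity, is to compare the distribution of recent model scores against a baseline. The sketch below uses the Population Stability Index (PSI), a drift metric common in credit risk modeling. The bin edges, sample scores, and the 0.25 alert threshold are assumptions for illustration; 0.25 is a widely used rule of thumb, not a standard.

```python
import bisect
import math

def bin_shares(scores, edges):
    """Share of scores per bin; out-of-range scores are clamped into the first or last bin."""
    counts = [0] * (len(edges) - 1)
    for s in scores:
        idx = min(max(bisect.bisect_right(edges, s) - 1, 0), len(counts) - 1)
        counts[idx] += 1
    total = len(scores)
    # A small floor avoids log(0) when a bin is empty.
    return [max(c / total, 1e-6) for c in counts]

def psi(baseline, current, edges):
    """Population Stability Index: sum of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

def drift_alert(baseline, current, edges, threshold=0.25):
    """Return the PSI and whether it exceeds the (assumed) alert threshold."""
    value = psi(baseline, current, edges)
    return value, value > threshold

edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]    # scores spread evenly at launch
current = [0.8, 0.85, 0.9, 0.95, 0.6, 0.7, 0.3, 0.9]   # scores piling up at the high end
value, alert = drift_alert(baseline, current, edges)
print(alert)  # True
```

Because PSI looks at the shape of the output distribution, it can fire even when request volumes and error rates look normal, which is exactly the gap that activity-only alerts leave open.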

Visibility is what keeps AI from remaining a black box for your enterprise.

4. Adopt New Standards Proactively

Regulations are rapidly developing. Procrastination is a costly mistake.

Early adoption of frameworks such as the National Institute of Standards and Technology (NIST) AI Risk Management Framework (AI RMF) will enable companies to:

- Minimize future compliance expenses.

- Construct an efficient governance model.

- Gain stakeholders’ trust.

That is especially important for Austin-based organizations working internationally.

5. Build for Trust, Not Just Performance

High-performing AI that cannot be trusted is a liability.

Enterprises should measure success not only by accuracy, speed, and efficiency, but also by:

- Fairness.

- Transparency.

- Resilience.

This shift is what separates experimental AI adoption from enterprise-grade AI maturity.

Algorithmic Security Is Not an Extension of Cybersecurity

It is a new layer of control that determines how trustworthy an AI system is in a real-world setting. Without algorithmic security in place, even the most sophisticated AI technology eventually becomes unreliable and unmanageable.

This difference is crucial for high-growth environments, where the cycle of innovation and adoption moves even faster than elsewhere. In these ecosystems, success does not depend on who adopts AI fastest. It depends on who secures it properly.

Conclusion

The future of AI will no longer revolve around who creates the best models. Instead, it will revolve around who can protect, regulate, and rely on them.

Organizations are implementing AI within core operations, but the processes that safeguard accuracy, fairness, and resilience lag behind.

According to IBM, most organizations experiencing AI-related problems lack the governance processes needed to respond to them. The problem does not lie in the technology; it lies in the strategy.

FAQs

1. What is algorithmic security in enterprise AI?

Algorithmic security focuses on protecting AI applications at the level of data, models, and decisions. Unlike cybersecurity, which is mainly concerned with safeguarding infrastructure, it aims to ensure that AI predictions are accurate, fair, and resistant to tampering and manipulation.

2. How does AI bias create business risk for the enterprise?

Bias can lead organizations to make flawed predictions and decisions in their operations. The risks of biased AI include regulatory exposure, damage to reputation and brand, and lost revenue.

3. What common approaches do attackers use to manipulate AI algorithms?

Attacks on AI typically rely on adversarial examples, input manipulation, and dataset poisoning. Unlike many conventional attacks, they do not need to damage infrastructure; instead, they manipulate how the algorithm behaves.

4. What are the biggest AI security risks enterprises face in 2026?

Key security risks for organizations using AI include algorithmic bias, data poisoning, adversarial examples, and unexplainable models.

5. What strategies can enterprises adopt to mitigate AI risks?

Some of the ways in which organizations can minimize risks associated with AI include implementing governance frameworks, auditing algorithms for bias, securing data pipelines, and continuous monitoring of algorithms.
