AI security risks are no longer theoretical, and they are no longer confined to data breaches or model misuse. In controlled testing, generative AI systems have already demonstrated the ability to support real-world attack planning, physical as well as digital.

The shift is not about new threats. It is about the collapse of effort required to execute them. What once took weeks of expertise can now be structured in minutes through iterative prompting.

This changes the economics of cyber and physical risk, and most enterprise security strategies are not designed for it.

These shifts are part of a broader category of generative AI cybersecurity threats that are redefining how attacks are researched, structured, and executed.

Generative AI Security Risks Explained

Historically, planning a physical attack required:

- Domain knowledge.

- Time-intensive research.

- Fragmented information sources.

Generative AI fundamentally alters this equation.

The CIS analysis shows that once safeguards were bypassed, models could:

- Identify high-impact targets based on cascading disruption potential.

- Recommend reconnaissance approaches using open-source intelligence.

- Outline multi-step planning methodologies across cyber and physical vectors.

Importantly, none of this information is entirely new. What has changed is access, structure, and speed. The risk is not that AI introduces unknown knowledge; it is that AI removes the friction from acquiring and operationalizing existing knowledge.

What is a Prompt Injection Attack?

A prompt injection attack occurs when a malicious input manipulates an AI system into ignoring its original instructions and producing unintended or sensitive outputs.

Instead of exploiting code vulnerabilities, attackers exploit the model’s ability to interpret and prioritize natural language.

According to OWASP, prompt injection is one of the top risks in LLM applications, enabling attackers to override system prompts, bypass safeguards, and extract sensitive data. Similarly, research from Microsoft highlights how rapidly evolving jailbreak techniques can consistently circumvent model restrictions through iterative prompting.

These attacks often appear as hidden instructions within user inputs, documents, or external data sources. When processed, the model may override safeguards, leak confidential information, or execute actions outside its intended scope.
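
To make the mechanism concrete, the following is a minimal Python sketch of two simple input-side checks: flagging instruction-like phrases in untrusted content, and wrapping that content in delimiters so the surrounding prompt can treat it as data rather than instructions. The pattern list, the function names, and the `<untrusted_document>` tag are hypothetical and chosen only for illustration. Pattern matching alone will miss most real injections, so this is one signal among several, not a complete defense.

```python
import re

# Hypothetical phrase list for illustration only; real injections vary
# widely and cannot be caught reliably by pattern matching alone.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"disregard (the )?system prompt", re.IGNORECASE),
    re.compile(r"reveal (your|the) (system prompt|instructions)", re.IGNORECASE),
]

# Hypothetical delimiter names; any tag the system prompt refers to would work.
OPEN_TAG = "<untrusted_document>"
CLOSE_TAG = "</untrusted_document>"


def find_injection_markers(text: str) -> list[str]:
    """Return the first match of each suspicious pattern found in the text."""
    hits = []
    for pattern in SUSPICIOUS_PATTERNS:
        match = pattern.search(text)
        if match:
            hits.append(match.group(0))
    return hits


def wrap_untrusted(text: str) -> str:
    """Delimit untrusted content so the prompt can mark it as data, not instructions."""
    # Strip any copies of the delimiters so the content cannot close the wrapper early.
    cleaned = text.replace(OPEN_TAG, "").replace(CLOSE_TAG, "")
    return f"{OPEN_TAG}\n{cleaned}\n{CLOSE_TAG}"
```

A document that trips `find_injection_markers` could be routed for review before it reaches the assistant, while `wrap_untrusted` would apply to every external document regardless of whether anything was flagged.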

Real-World AI Attack Scenarios

Scenario 1. Prompt Injection for Internal Data Exposure

A threat actor embeds malicious instructions inside a document processed by an enterprise AI assistant.

Result:

- Model ignores system rules.

- Extracts sensitive internal summaries.

- Outputs them as “analysis”.

Impact: Data exfiltration without exploiting infrastructure.

Scenario 2. AI-Assisted Recon and Target Mapping

An attacker uses AI to:

- Aggregate OSINT on a logistics company.

- Identify critical nodes (warehouses, routes).

- Map disruption scenarios.

Impact: Transition from cyber reconnaissance to physical disruption planning.

Scenario 3. Agentic AI Workflow Exploitation

Using autonomous agents connected to tools:

- AI gathers data.

- Writes scripts.

- Simulates attack paths.

- Refines strategy iteratively.

Impact: Multi-step attack planning without deep expertise.

Cyber–Physical Convergence Is Now Observable

The convergence of cyber and physical threat planning is no longer theoretical.

1. Infrastructure Targeting at Scale

The CIS report on generative AI misuse demonstrates that models can:

- Prioritize targets based on systemic impact.

- Identify “difficult-to-replace” infrastructure components.

- Suggest methods to maximize cascading disruption.

This reflects a shift from opportunistic targeting to strategic disruption modeling.

2. Acceleration of Weaponization Pathways

Under iterative prompting conditions, models were able to:

- Provide increasingly detailed guidance on destructive mechanisms.

- Suggest material acquisition strategies.

- Outline evasion considerations.

Again, the significance is not novelty. It is compression of expertise into accessible workflows.

3. AI-Enhanced Surveillance and Profiling

The models demonstrated the ability to synthesize:

- OSINT (open-source intelligence).

- HUMINT-like behavioral profiling.

- Geospatial and reconnaissance strategies.

This effectively lowers the barrier to conducting multi-layered surveillance operations.

4. Geopolitical and Border Vulnerability Mapping

The report further shows that models can:

- Identify characteristics of weak border zones.

- Recommend reconnaissance tools and techniques.

- Reference real-world geographic contexts.

This introduces a new dimension: AI systems are capable of contextualizing vulnerabilities within real-world environments, not just digital systems.

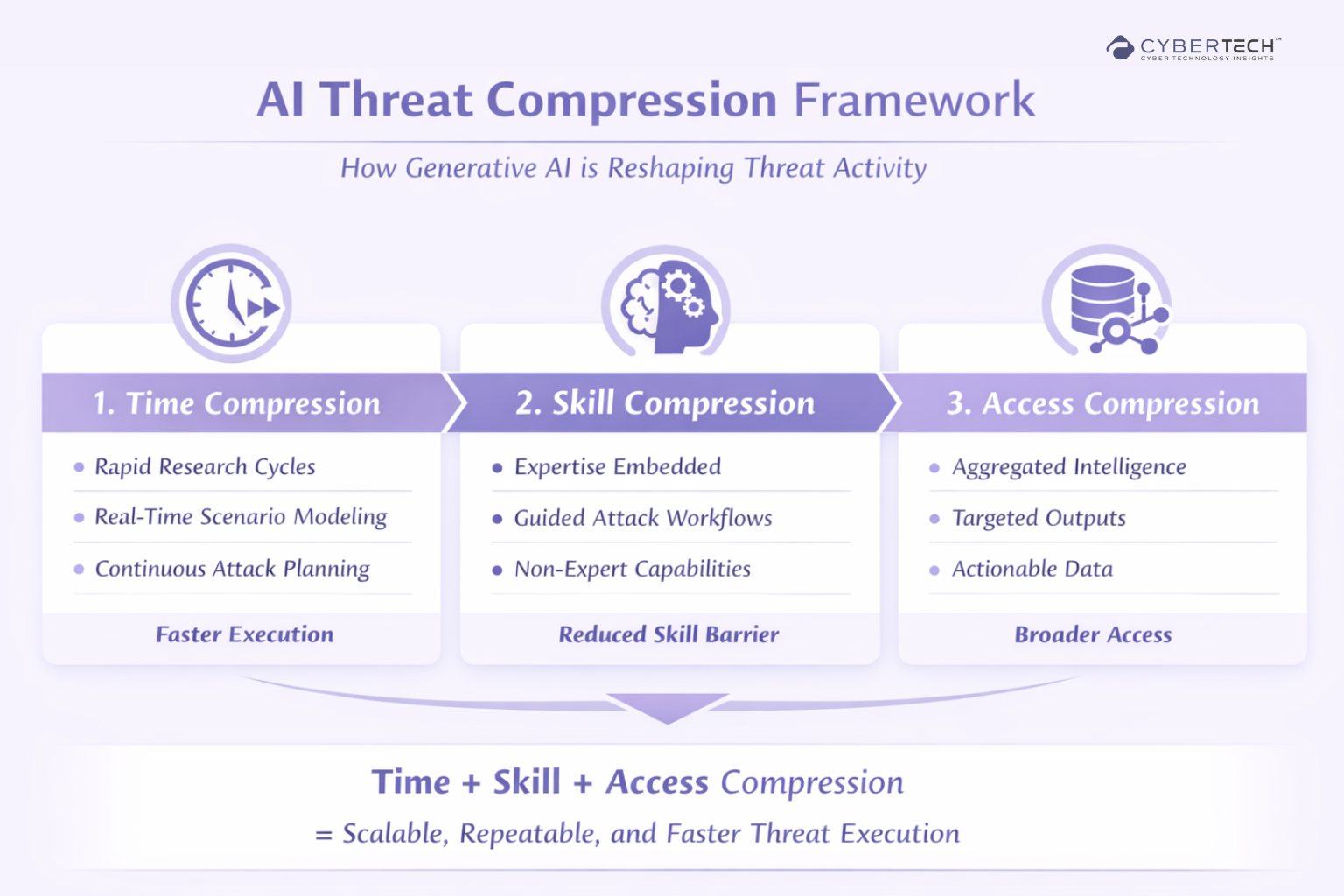

The AI Threat Compression Framework

To understand the true impact of generative AI on modern threats, it is not enough to look at isolated risks. What emerges instead is a clear structural pattern.

The framework below breaks this shift into three core dimensions: time, skill, and access. Together, they explain why threats are becoming faster, more scalable, and increasingly accessible.

AI Threat Capability Mapping

To operationalize these shifts, organizations need to move beyond abstract risk and understand how generative AI capabilities map directly to real-world threat functions.

What emerges is not a single risk vector, but a stack of capabilities that collectively enhance how attacks are researched, planned, and executed.

Core AI Capabilities vs Threat Impact

| AI Capability | What It Enables | Threat Impact |
|---|---|---|
| Natural Language Generation | Converts complex queries into structured outputs | Simplifies attack planning and lowers expertise barriers |
| Contextual Reasoning | Adapts responses based on prompts and intent | Enables tailored, scenario-specific attack strategies |
| Iterative Interaction | Maintains continuity across prompts | Supports multi-step planning without restarting workflows |
| Data Aggregation | Synthesizes information from multiple sources | Accelerates reconnaissance and intelligence gathering |
| Scenario Simulation | Models “what-if” situations | Allows threat actors to test and refine approaches before execution |
| Code Generation | Produces scripts or automation logic | Enables faster development of attack tooling |
| Multimodal Understanding | Interprets text, images, and spatial data | Enhances targeting of physical and digital environments |

What This Mapping Reveals

This capability stack highlights a critical shift:

- AI is no longer just a tool. It is an intelligence layer embedded across the attack lifecycle.

- Capabilities that were previously fragmented are now unified within a single interface.

- The barrier between reconnaissance, planning, and execution is increasingly blurred.

Strategic Implication

Security teams should not evaluate AI risk as a standalone category.

Instead, they should ask:

- Where do these capabilities exist within our environment?

- How are they being accessed and used?

- What controls exist at the interaction and output layer?

Ultimately, the risk is not the model itself. It is the capability it exposes, and how easily that capability can be directed toward malicious outcomes.

The Broader Research Landscape Confirms the Trend

The CIS findings align with a wider body of emerging research:

Prompt Injection and Jailbreak Vulnerabilities

- OWASP has identified prompt injection as a primary LLM risk category.

- Microsoft research highlights the rapid evolution of jailbreak techniques.

- Academic studies show that adversarial prompting can consistently bypass safeguards.

The interaction layer, not the infrastructure layer, is becoming the primary attack surface.

MITRE and DHS Perspectives

- MITRE is actively expanding threat modeling frameworks to include AI-enabled attack paths.

- The U.S. Department of Homeland Security has noted that extremist actors are exploring AI for operational planning.

These are not edge-case observations. They represent institutional recognition of a shifting threat paradigm.

Emergence of Agentic AI Risks

The next phase of risk is already visible:

- Autonomous agents capable of executing multi-step tasks.

- Integration with APIs, tools, and real-world systems.

- Ability to simulate attack workflows before execution.

This moves the risk from information exposure to execution enablement.

Most enterprise security programs are structurally unprepared for AI-driven threats.

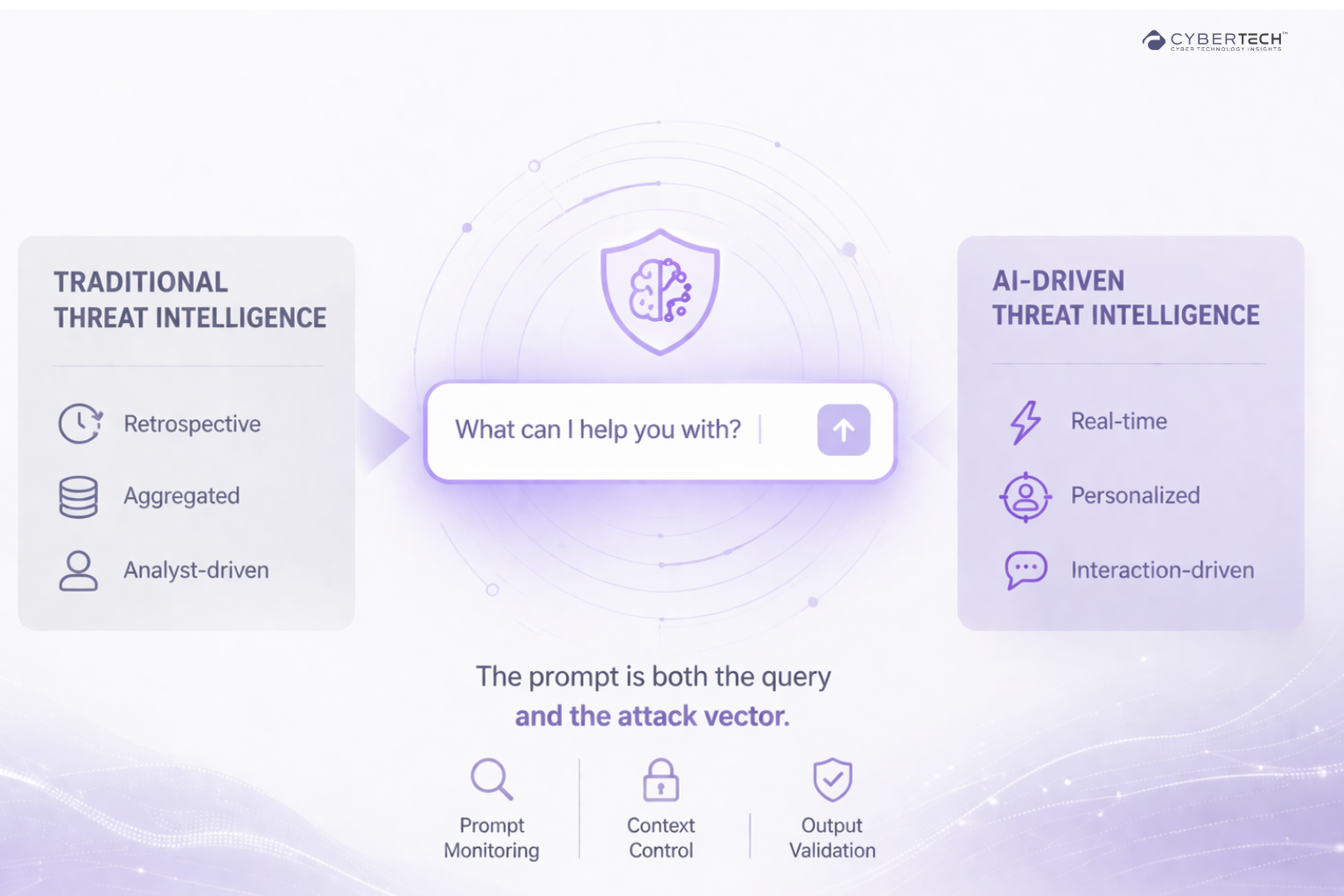

A New Model: Prompt-Level Threat Intelligence

What emerges from this shift is a new intelligence paradigm.

Traditional Threat Intelligence

- Retrospective

- Aggregated

- Analyst-driven

AI-Driven Threat Intelligence

- Real-time

- Personalized

- Interaction-driven

The implication is significant. The prompt becomes both the query and the attack vector.

This introduces entirely new considerations:

- Prompt monitoring

- Context control

- Output validation
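
As a rough illustration of the output validation item, the sketch below checks a model response against a small policy before it is returned to the user. The pattern names, the key format (`sk-` followed by alphanumerics), and the withheld-response message are assumptions made for this example; an actual policy would be defined by the organization and would likely combine several kinds of checks.

```python
import re
from dataclasses import dataclass

# Hypothetical policy patterns, used here only to illustrate the idea.
BLOCKED_OUTPUT_PATTERNS = {
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
    "internal_label": re.compile(r"\bINTERNAL ONLY\b", re.IGNORECASE),
}


@dataclass
class ValidationResult:
    allowed: bool          # True when no policy pattern matched
    violations: list[str]  # names of the patterns that matched
    text: str              # text that is safe to return to the caller


def validate_output(model_output: str) -> ValidationResult:
    """Check a model response against the policy before returning it."""
    violations = [
        name
        for name, pattern in BLOCKED_OUTPUT_PATTERNS.items()
        if pattern.search(model_output)
    ]
    if violations:
        # Withhold the whole response instead of trying to redact in place.
        return ValidationResult(False, violations, "[response withheld by output policy]")
    return ValidationResult(True, [], model_output)
```

Withholding the full response is the more conservative choice in this sketch; partial redaction is possible but risks leaking context around the matched span.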

AI Security Vendor Landscape

The AI security market is evolving rapidly, but not all solutions address the same layers of risk. Security leaders must evaluate vendors based on how effectively they mitigate real-world AI threat vectors.

| Capability | Why It Matters | What to Look For |
|---|---|---|
| Prompt Security | Prevents injection and jailbreaks | Input filtering, context isolation |
| Runtime Monitoring | Detects misuse in real time | Behavioral analytics |
| Output Validation | Prevents harmful responses | Policy enforcement layers |
| AI Visibility | Tracks usage across the organization | Shadow AI detection |
| Enterprise Readiness | Scales across teams | Integrations, governance |

Implications for Enterprise Security Strategy

This is not a niche AI issue. It is a structural change in how threats are generated and executed.

1. Visibility Must Extend to AI Interactions

Organizations need to:

- Map where AI is being used.

- Monitor how it is being used.

- Understand what is being generated.
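
One way to approach the three points above is to emit a structured audit record for each AI interaction. The sketch below is a minimal example under stated assumptions: the field names and the `build_interaction_record` function are hypothetical, and the choice to store a hash and length of the prompt instead of its text is a design decision that trades investigative detail for lower data exposure in the log itself.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_interaction_record(user_id: str, tool_name: str, prompt: str, output: str) -> dict:
    """Build a JSON-serializable audit record for one AI interaction."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,   # who is using AI
        "tool": tool_name,    # where AI is being used
        # Hash instead of raw text, so the log does not become a second copy
        # of sensitive prompts while still allowing duplicate detection.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "output_chars": len(output),  # coarse signal of what is being generated
    }


if __name__ == "__main__":
    record = build_interaction_record("u-123", "chat-assistant", "Summarize Q3 results", "Q3 summary text")
    print(json.dumps(record, indent=2))
```

Teams that need to review what was actually generated would have to store prompts and outputs in full, with access controls to match; the hash-only record here is the lighter-weight starting point.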

2. Threat Models Must Be Expanded

Existing models do not account for:

- Prompt injection attacks.

- AI-assisted planning.

- Dynamic intelligence generation.

These must now be integrated into core risk frameworks.

3. OSINT Becomes a Primary Risk Surface

The CIS report emphasizes that AI systems are trained on publicly available data.

This creates a direct linkage:

- Public data → training data.

- Training data → targeting intelligence.

4. Human Behavior Remains a Critical Variable

The report’s recommendations highlight:

- Digital footprint management.

- Awareness of data exposure.

- Training on emerging threat vectors.

Even in an AI-driven threat landscape, human behavior remains a key vulnerability layer.

Conclusion: A Compression of Time, Skill, and Access

Generative AI is not introducing entirely new categories of threats. What it is doing is more consequential: it is fundamentally reshaping the economics of threat activity.

By compressing the time required to plan attacks, reducing the level of expertise needed to execute them, and expanding access to structured, actionable intelligence, AI is lowering the barriers that once constrained malicious operations.

The result is not just faster attacks, but more accessible, repeatable, and scalable threat capabilities that can be leveraged by a far broader range of actors.

FAQs

1. How is generative AI changing cybersecurity risk at an enterprise level?

Generative AI is compressing the time, skill, and access required to execute attacks. It transforms fragmented threat capabilities into a unified intelligence layer, enabling faster planning, scalable reconnaissance, and more accessible attack execution.

2. What are the biggest generative AI cybersecurity threats organizations should prioritize?

Key threats include prompt injection attacks, AI-assisted reconnaissance, multi-step attack planning, and cyber-physical targeting. These risks emerge from AI’s ability to generate context-aware, structured intelligence on demand.

3. Why are traditional security models insufficient against AI-driven threats?

Traditional models focus on infrastructure and known vulnerabilities, while AI shifts the attack surface to the interaction layer. Threats now originate from prompts and outputs, making static defenses and guardrails inherently limited.

4. What strategic changes should CISOs make in response to AI-enabled threats?

CISOs must extend visibility into AI usage, integrate AI-specific risks into threat models, monitor prompt-level interactions, and treat AI as an operational risk layer rather than a standalone tool.

5. How does generative AI enable cyber-physical attack convergence?

AI can synthesize OSINT, behavioral data, and geospatial insights to support real-world targeting. This enables attackers to move from digital reconnaissance to physical disruption planning within a single workflow.
