From AI startups on South Congress to enterprise innovation hubs across downtown, organizations here are deploying artificial intelligence at speed. However, while AI accelerates growth, it also expands the attack surface in ways most teams are not fully prepared for.

Think of it less like upgrading your tech stack and more like stepping into a storyline from Ex Machina or The Matrix.

Powerful systems. Intelligent behavior. Hidden vulnerabilities that are not always visible until it is too late.

What is AI Security?

AI security is the discipline of protecting intelligent systems that influence outcomes.

That includes securing:

- Data that trains and feeds models.

- Models that generate decisions.

- Infrastructure that operationalizes them.

- Applications where those decisions are executed.

AI security is about controlling decision integrity under adversarial conditions. If your AI systems can be influenced, your business outcomes can be influenced.

Why AI Security Has Become a Board-Level Concern

In our cybersecurity growth ecosystem, AI is already tied to:

- Revenue optimization.

- Fraud detection.

- Clinical decision support.

- Customer engagement automation.

- Predictive analytics.

This changes the risk equation. Traditional cybersecurity protects systems and access.

AI security must also protect logic, behavior, and outcomes. The risk is no longer just breach-driven. It is decision-driven.

According to Check Point Software Technologies’ 2026 Cyber Security Report, organizations are now facing nearly 2,000 cyber attacks per week, driven by the combined use of automation, AI, and social engineering across multiple channels.

As AI systems become more interconnected across data pipelines, APIs, and external platforms. No single organization has complete visibility into emerging threats.

Data from the Cybersecurity and Infrastructure Security Agency confirms that the timely exchange of threat intelligence across ecosystems is critical to reducing both the speed and scale of cyber attacks.

“Our state’s growing population and economy depend on a robust and efficient digital infrastructure to deliver services to Texans while protecting state-held data against cyberattacks.” – Rahul Sreenivasan, Director of Government Performance and Fiscal Policy.

Pinpoint Where Your AI Decisions are Vulnerable.

Map Risk Across Models, Data and Critical Systems.

Deconstructing the AI Risk Surface

AI adoption is outpacing governance at scale. Nearly every organization experimenting with AI is already encountering security incidents, often without the controls needed to detect or contain them.

According to data from IBM, 97% of organizations experience an AI-related security incident without proper controls.

From a cybertech strategy perspective, AI expands the attack surface across four interconnected layers:

1. Data Layer: Where Integrity Begins

Data is no longer just an asset. It is behavioral input.

Threat vectors:

- Data poisoning that alters model behavior over time.

- Leakage of sensitive or regulated data through AI interactions.

- Lack of visibility into training data lineage.

Implication: If data integrity is compromised, every downstream decision is compromised.

2. Model Layer: The Decision Engine

Models are not static assets. They are adaptive systems.

Threat vectors:

- Adversarial inputs that manipulate outputs without detection.

- Model inversion exposing sensitive training data.

- Model extraction replicating proprietary intelligence.

Implication: Your competitive advantage can be reverse-engineered or distorted.

3. Infrastructure Layer: The Execution Fabric

AI systems operate across:

- Cloud environments.

- APIs.

- Third-party integrations.

- MLOps pipelines.

Strategic risk: Fragmented ownership leads to fragmented security.

Implication: Security gaps emerge at integration points, not just endpoints.

4. Application Layer: Where Risk Materializes

This is where AI meets business processes.

Threat vectors:

- Prompt injection altering system behavior.

- Unauthorized automation triggering unintended actions.

- Decision manipulation impacting outcomes.

Implication: This is where technical risk becomes financial and reputational risk.

When these layers begin to interact under adversarial conditions, risk stops being theoretical and starts becoming operational.

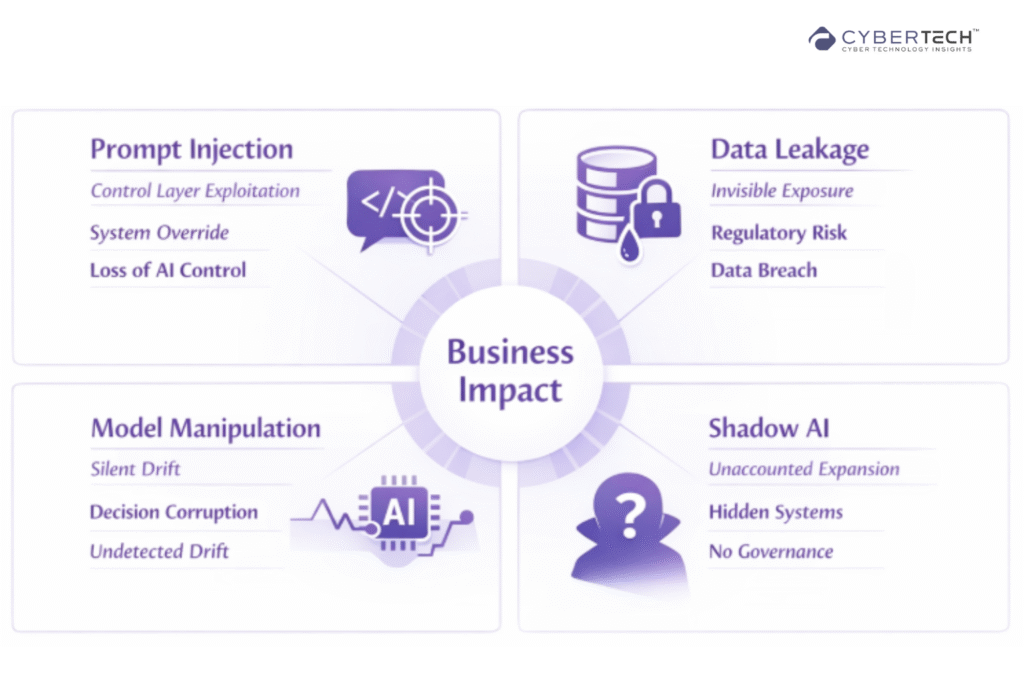

Key AI Threats Through a Strategic Lens

AI threats are no longer isolated technical events. They are coordinated methods of influencing how systems behave and how decisions are made.

To respond effectively, leaders need to understand not just what the threats are, but how they translate into real business impact.

1. Prompt Injection: Control Layer Exploitation

Attackers override system instructions using crafted inputs.

Strategic impact: Loss of control over AI behavior in real-time environments.

2. Data Leakage: Invisible Exposure

Sensitive data surfaces through AI responses.

Strategic impact: Regulatory risk, IP loss, and trust erosion.

3. Model Manipulation: Silent Drift

Gradual influence over model outputs.

Strategic impact: Compromised decision quality without immediate detection.

4. Shadow AI: Unaccounted Risk Expansion

Unapproved AI usage across teams.

Strategic impact: Unmapped attack surface and governance failure.

Benchmark your AI security maturity against high-growth, AI-driven organizations.

Identify gaps that directly impact business risk and scalability.

Control Is the New Security Perimeter

AI has already moved into the core of how modern organizations operate. It is shaping decisions, influencing outcomes, and driving competitive advantage in real time.

AI security is no longer optional. It is a control function at the heart of the business.

Organizations that fail to secure AI are not just accepting technical risk. They are exposing decision integrity, regulatory standing, and market trust.

Unlike traditional breaches, these failures do not always announce themselves. They surface gradually, through flawed outputs, manipulated logic, and compromised outcomes.

FAQs

1. What is AI security, and why is it important for businesses?

AI security focuses on protecting AI systems, data, and decision outputs from manipulation or misuse. It is critical because compromised AI can directly impact business decisions, customer trust, and regulatory compliance.

2. What are the biggest risks associated with AI adoption in enterprises?

The primary risks include data leakage, model manipulation, prompt injection attacks, and lack of visibility into AI usage. These risks can lead to flawed decisions, financial loss, and reputational damage.

3. How is AI security different from traditional cybersecurity?

Traditional cybersecurity protects systems and access. AI security protects decision integrity, model behavior, and data influence. It addresses how outcomes can be manipulated, not just how systems are accessed.

4. What are prompt injection attacks in AI systems?

Prompt injection attacks involve manipulating AI inputs to override intended instructions. This can cause systems to expose sensitive data, execute unintended actions, or produce compromised outputs.

5. How can organizations improve their AI security posture?

Organizations should map AI usage, secure data pipelines, test models against adversarial inputs, and establish cross-domain visibility across systems. Aligning security with business risk is essential for long-term resilience.

To participate in upcoming interviews, please reach out to our CyberTech Media Room at info@intentamplify.com.

🔒 Login or Register to continue reading