In early 2026, Iran crossed a threshold that few modern economies have experienced. The country remained effectively disconnected from the global internet for over 1,000 hours, with connectivity levels dropping to approximately 1% of normal traffic, according to network monitoring data from NetBlocks and infrastructure observations reported by industry sources.

The timing of Iran’s internet blackout aligns closely with broader geopolitical developments, including Operation Epic Fury, during which President Donald Trump publicly urged Iranian citizens to “seize control of your destiny” and assume control of their government.

This was not the result of a cyberattack, infrastructure outage, or cascading technical failure. It was a deliberate, state-enforced disconnection: a policy decision that transformed internet access from a utility into a controlled variable.

Independent measurements reinforce the scale of this event. Data from Cloudflare has consistently shown that during shutdown events, national traffic can collapse to near-zero within minutes, effectively removing a country from the global routing system.

What makes the Iran case strategically significant is not just the duration, which has exceeded 40 consecutive days in some reporting, but the precision with which the shutdown was executed and sustained.

The Internet Is No Longer One Network

During the shutdown, domestic networks and state-controlled platforms continued to function, while access to global services, cloud platforms, and external communication channels was largely eliminated.

This effectively created a bifurcated internet environment, one that operates locally but is isolated globally.

Research and policy analysis from Freedom House further contextualizes this shift, identifying a broader global trend toward “sovereign internet” models where governments build the technical capability to isolate national networks on demand.

The assumption that the internet is a persistent, always-available layer of infrastructure is no longer universally valid.

Instead, connectivity itself is emerging as a conditional resource, subject to geopolitical dynamics, regulatory controls, and state intervention.

If the network can be deliberately withdrawn, the next question becomes unavoidable.

What are the consequences inside an enterprise environment when that happens?

Why Connectivity Loss Triggers Systemic Enterprise Risk

When a nation-scale internet disruption occurs, the immediate assumption is loss of access. What is less visible, but far more consequential, is how deeply modern enterprise architecture is intertwined with continuous external connectivity.

Over the past decade, organizations have optimized for scalability, flexibility, and speed by externalizing core functions to cloud platforms, SaaS ecosystems, and globally distributed services. This has created an operational model where the internet is not just a transport layer, but a foundational dependency.

Cloud infrastructure is often the first and most visible point of failure. According to Gartner, a significant majority of enterprises now rely on cloud-first or cloud-preferred strategies for critical workloads, with projections indicating that over 85% of organizations will adopt a cloud-first principle for new initiatives.

“There is no business strategy without a cloud strategy,” said Milind Govekar, distinguished vice president at Gartner. “The adoption and interest in public cloud continues unabated as organizations pursue a ‘cloud first’ policy for onboarding new workloads.”

In a constrained connectivity environment, access to hyperscale providers becomes inconsistent or entirely unavailable, effectively severing access to applications, storage, and compute resources that are not locally replicated. This is not simply a matter of downtime. It represents a structural gap in architectural resilience.

Identity and access management systems introduce a second layer of fragility. Modern authentication frameworks, particularly those aligned with zero trust principles, are heavily dependent on continuous verification through centralized identity providers.

Okta and similar platforms operate on real-time authentication, token validation, and policy enforcement mechanisms that assume uninterrupted connectivity. When those validation loops cannot reach external identity services, organizations face a paradox.

Systems may remain operational at a local level, but legitimate users can be locked out due to failed authentication checks. In this scenario, security controls themselves become a point of operational failure.
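One way to picture the alternative is a token that can be checked locally, without a round trip to the identity provider. The sketch below is illustrative only: `sign_token` and `verify_offline` are hypothetical helpers, and the shared HMAC key is a simplifying assumption. Production systems more commonly verify asymmetrically signed tokens against cached public keys.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def sign_token(claims: dict, key: bytes) -> str:
    """Issue a token while the identity service is still reachable."""
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_offline(token: str, key: bytes, now: Optional[float] = None) -> Optional[dict]:
    """Validate signature and expiry using only locally held material."""
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return None  # malformed token
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None  # tampered or signed with a different key
    claims = json.loads(base64.urlsafe_b64decode(payload))
    current = time.time() if now is None else now
    if claims.get("exp", 0) <= current:
        return None  # expired; offline validity is bounded by "exp"
    return claims
```

The trade-off is that a locally verified token cannot be revoked centrally until connectivity returns, so the expiry window effectively becomes the risk budget.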

Designing for a World Where Connectivity Is Not Guaranteed

The architectural assumptions that have shaped enterprise systems over the past decade are increasingly misaligned with emerging geopolitical and infrastructure realities.

Cloud-first strategies, centralized identity systems, and globally distributed application architectures were built on the premise of persistent, high-availability internet access. That premise is now under pressure.

Events such as Iran’s prolonged disconnection illustrate that connectivity can be deliberately constrained, not just disrupted. As a result, resilience can no longer be defined solely in terms of uptime or recovery time objectives.

It must also encompass the ability to operate under conditions of degraded or absent external connectivity.

This shift is already reflected in evolving guidance from the National Institute of Standards and Technology. In its zero trust architecture framework, NIST emphasizes continuous verification and dynamic policy enforcement. However, most enterprise implementations interpret this through a cloud-centric lens, where identity validation and policy decisions are tightly coupled with centralized services.

In a constrained connectivity environment, such dependencies introduce failure points. A more resilient interpretation of zero trust requires local decision-making capabilities, where authentication, authorization, and policy enforcement can continue within a segmented environment, even when external identity providers are unreachable.
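A minimal sketch of that kind of local decision-making follows. It assumes a hypothetical `remote_check` callable that stands in for the external identity provider and raises `ConnectionError` when it cannot be reached. The class name, the grace window, and the deny-by-default fallback are illustrative choices, not a prescribed design.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Decision:
    allowed: bool
    source: str  # "idp", "cache", or "deny-default"


class LocalPolicyPoint:
    """Hypothetical enforcement point: prefers the remote identity provider,
    falls back to a recent cached decision when it is unreachable."""

    def __init__(self, remote_check: Callable[[str, str], bool],
                 grace_seconds: float = 8 * 3600,
                 clock: Callable[[], float] = time.time):
        self._remote_check = remote_check
        self._grace = grace_seconds
        self._clock = clock
        self._cache: Dict[Tuple[str, str], Tuple[bool, float]] = {}

    def authorize(self, user: str, resource: str) -> Decision:
        key = (user, resource)
        try:
            allowed = self._remote_check(user, resource)
        except ConnectionError:
            cached = self._cache.get(key)
            if cached is not None:
                allowed, stamped = cached
                # Reuse the last known answer only inside the grace window.
                if self._clock() - stamped <= self._grace:
                    return Decision(allowed, "cache")
            # No usable history: fail closed rather than open.
            return Decision(False, "deny-default")
        self._cache[key] = (allowed, self._clock())
        return Decision(allowed, "idp")
```

Recording the `source` of each decision lets operators audit afterward how many access grants were made on cached rather than live policy.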

Assessing Enterprise Readiness for Connectivity Disruption

If Iran’s prolonged internet blackout exposed anything, it is that most organizations are not designed to operate without continuous external connectivity.

The challenge is not a lack of security controls, but a lack of visibility into how deeply those controls depend on always-on infrastructure. For cybersecurity leaders, the first step is not redesign. It is assessment.

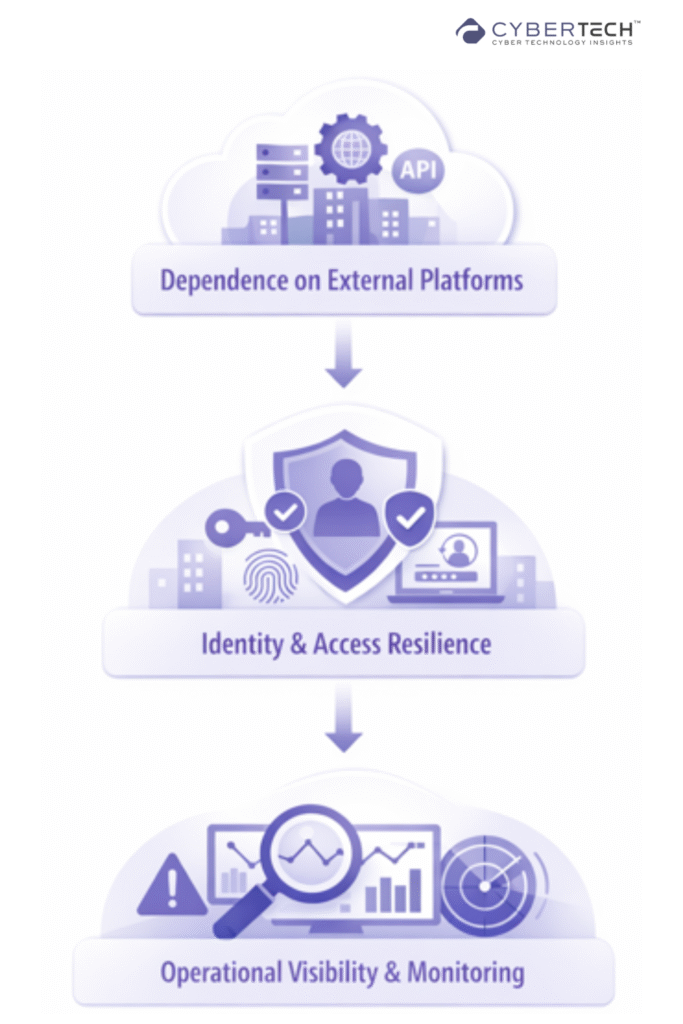

A meaningful readiness evaluation begins with understanding dependency concentration. Over the past decade, enterprises have consolidated critical functions into a relatively small number of external providers, including hyperscale cloud platforms, identity services, and API-driven ecosystems.

Research from the World Economic Forum has consistently highlighted how digital interdependence amplifies systemic risk, where the failure of a single external dependency can cascade across multiple business functions. In the context of a connectivity disruption, this concentration becomes a critical exposure point.

Organizations must identify which systems cannot function without external validation, routing, or compute, and quantify the operational impact of that dependency.
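As a rough illustration of such an inventory, the sketch below walks a dependency map and reports which external services each system relies on, directly or through other internal systems, and how many systems concentrate on each one. The system names, the `ext:` prefix convention, and both function names are invented for the example.

```python
from collections import Counter
from typing import Dict, Set

# Hypothetical inventory: each system maps to what it needs in order to run.
# Names prefixed with "ext:" stand for services reached over the public internet.
DEPENDENCIES: Dict[str, Set[str]] = {
    "payroll": {"sso", "ext:cloud-storage"},
    "sso": {"ext:identity-provider"},
    "plant-scada": {"local-historian"},
    "local-historian": set(),
    "helpdesk": {"sso"},
}


def externally_dependent(deps: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Map each system to the external services it depends on, transitively."""
    result: Dict[str, Set[str]] = {}

    def visit(name: str, seen: Set[str]) -> Set[str]:
        if name.startswith("ext:"):
            return {name}
        if name in result:
            return result[name]
        if name in seen:  # guard against circular dependencies
            return set()
        seen = seen | {name}
        found: Set[str] = set()
        for dep in deps.get(name, set()):
            found |= visit(dep, seen)
        return found

    for system in deps:
        result[system] = visit(system, set())
    return result


def concentration(exposure: Dict[str, Set[str]]) -> Counter:
    """Count how many systems ultimately rely on each external service."""
    return Counter(svc for services in exposure.values() for svc in services)
```

In this toy inventory, `plant-scada` has no external exposure, while three of the five systems ultimately depend on the single identity provider, which is the kind of concentration the assessment is meant to surface.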

The second layer of assessment focuses on identity resilience. As emphasized in frameworks from the Cybersecurity and Infrastructure Security Agency, identity has become the central control plane in modern security architecture.

However, most implementations assume continuous communication with centralized identity providers.

A robust assessment must examine whether authentication and authorization processes can continue under degraded conditions. This includes evaluating the availability of cached credentials, local policy enforcement capabilities, and fallback authentication mechanisms.

Without these, organizations risk a scenario where systems remain operational, but access is effectively denied to legitimate users.

Operational visibility forms the third critical dimension. Modern security operations depend heavily on real-time telemetry, external threat intelligence, and cloud-based analytics platforms.

According to research from IBM Security, reduced visibility significantly increases both the time to detect and the cost of responding to security incidents.

In a constrained connectivity environment, organizations must assess whether they can maintain baseline monitoring and detection capabilities using locally available data.

This requires understanding which logs, alerts, and signals are retained within the environment, and which depend on continuous external ingestion or analysis.
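One pattern that keeps signals available locally is store-and-forward buffering. The sketch below is a simplified illustration, assuming a hypothetical `ship` callable that represents upload to a cloud analytics platform and raises `ConnectionError` when the uplink is down. The class and method names are not taken from any particular product.

```python
from collections import deque
from typing import Callable, Deque, List


class StoreAndForward:
    """Hypothetical local event buffer that survives an uplink outage."""

    def __init__(self, ship: Callable[[List[dict]], None], max_events: int = 10000):
        self._ship = ship
        # Bounded buffer: once full, the oldest events are dropped first.
        self._buffer: Deque[dict] = deque(maxlen=max_events)

    def record(self, event: dict) -> None:
        self._buffer.append(event)

    def flush(self) -> int:
        """Try to ship buffered events; keep them if the uplink is down.
        Returns the number of events shipped."""
        if not self._buffer:
            return 0
        batch = list(self._buffer)
        try:
            self._ship(batch)
        except ConnectionError:
            return 0
        self._buffer.clear()
        return len(batch)

    def local_search(self, predicate: Callable[[dict], bool]) -> List[dict]:
        """Baseline detection against whatever is still held locally."""
        return [event for event in self._buffer if predicate(event)]
```

The buffer size effectively sets how long an outage the organization can ride out before it starts losing history, so it is worth sizing against the outage durations the assessment considers plausible.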

Closing Analysis: From Recovery to Connectivity-Resilient Security

Iran’s prolonged internet shutdown shifts the focus of enterprise resilience from recovery to continuity under constrained connectivity.

Traditional security models assume disruption equals compromise or outage. Here, neither applies. Systems remain intact, but external dependencies are severed.

Identity providers, cloud control planes, threat intel feeds, and API endpoints become unreachable. The result is not failure, but operational paralysis driven by dependency.

This exposes a core architectural flaw.

Modern security stacks are built on always-on assumptions. Zero trust implementations rely on continuous authentication. SIEM and XDR platforms depend on real-time telemetry ingestion. IAM workflows require external validation.

When these control planes lose connectivity, security controls degrade into denial of service against legitimate users, while visibility collapses.

In a fragmented network landscape, security maturity will not be defined by how well systems defend against threats, but by how effectively they operate without the network itself.

FAQs

1. How does reduced visibility impact breach detection and response time?

Reduced visibility significantly delays breach detection and containment. According to IBM, organizations without advanced monitoring and automation took up to 324 days to identify and contain breaches, compared to 247 days for more mature environments.

2. Does delayed detection increase the cost of a cyber incident?

Yes. IBM research shows that longer breach lifecycles directly increase financial impact, with prolonged incidents driving higher costs due to operational disruption, regulatory exposure, and recovery complexity.

3. What is the average time to identify and contain a breach today?

IBM reports that the average breach lifecycle is approximately 241 days, even with improved detection capabilities, highlighting persistent visibility and response gaps.

4. How does security automation improve visibility and outcomes?

Organizations using AI and security automation reduce breach lifecycles by up to 80 days and save nearly $1.9 million per incident, demonstrating the direct link between visibility, speed, and cost efficiency.

5. Why is visibility considered a critical control in modern cybersecurity architecture?

Visibility enables faster detection, containment, and response. Without it, threats persist longer, increasing both business disruption and financial loss, which IBM identifies as major contributors to rising breach costs.

