AI adoption is accelerating at an unprecedented pace. Enterprises are embedding AI into workflows, decision systems, customer interactions, and even core infrastructure.

Recent industry data shows that while organizations are aggressively investing in AI, most lack visibility into how these systems are actually being used, what data they access, and where risks are emerging.

This disconnect is not a technical gap. It is a business risk.

AI Is Now a Top-Tier Enterprise Risk

AI has become one of the top global business risks, second only to cybersecurity itself. This shift signals something critical.

As reported by Cybersecurity Dive, enterprises are fully aware of the cybersecurity risks associated with AI. Yet this awareness is not slowing adoption.

Organizations are continuing to scale AI, even as governance and risk frameworks remain underdeveloped.

Enterprises are no longer asking: How can AI improve efficiency?

They are asking: What happens when AI introduces risk at scale?

The Warning Signals from Threat Intelligence

AI is being actively leveraged by threat actors to scale attacks, automate reconnaissance, and exploit vulnerabilities faster than traditional defenses can respond.

Recent threat intelligence insights indicate a sharp rise in:

- AI-generated phishing and social engineering.

- Automated vulnerability discovery.

- Faster malware development cycles.

According to CrowdStrike’s Global Threat Report, AI-enabled attacks have surged 89%, with adversaries using AI to accelerate phishing, malware development, and reconnaissance while compressing breach timelines to under 30 minutes.

Meanwhile, enterprises are accelerating their own AI adoption across business functions, often without adequate visibility, control, or security oversight.

The Security Gap No One Is Tracking

One of the most overlooked issues is AI visibility.

Many organizations:

- Do not maintain an inventory of deployed AI models.

- Cannot track where sensitive data is being exposed.

- Lack consistent controls across AI-enabled workflows.

Unapproved tools and embedded AI features operate outside security oversight, creating ungoverned data flows and compliance exposure.

At scale, this becomes dangerous because you cannot secure what you cannot see.

AI Is Expanding the Attack Surface

AI doesn’t just introduce new tools. It redefines how attacks happen.

Threat actors are now using AI to:

- Automate phishing and social engineering.

- Generate malware faster.

- Identify vulnerabilities in real time.

According to industry survey data, 74% of organizations say AI-powered threats are already affecting them, while 60% feel unprepared to defend against them.

Cyberattacks are no longer just increasing. They are becoming faster, smarter, and more scalable by design.

AI adoption without governance is unmanaged risk.

The Rise of Autonomous Risk

The next phase of AI adoption introduces an even more complex challenge: agentic AI.

These are autonomous systems capable of:

- Taking actions independently.

- Accessing enterprise systems.

- Making decisions without constant human input.

While they drive efficiency, they also introduce unpredictable behavior and control risks.

Industry discussions are already highlighting that:

- Most enterprises are deploying AI agents.

- Very few are securing them properly.

This creates a new category of risk: machines acting inside your business environment without fully defined guardrails.

The Governance Gap

The core issue is not technology. It is governance.

Only a small percentage of organizations have:

- Defined AI risk frameworks.

- Integrated security into AI deployment.

- Aligned AI initiatives with business risk models.

Even among companies investing heavily in AI, only a minority are achieving meaningful value. The difference is governance, not spending.

This reinforces a key reality: AI maturity is not about adoption. It is about control.

The “Store Now, Exploit Later” Problem

AI introduces long-term risk that most organizations are underestimating.

Sensitive data shared with AI systems today may not be immediately exploited.

But it can be stored, analyzed, and weaponized later.

This includes:

- Intellectual property.

- Customer data.

- Strategic communications.

As AI capabilities evolve, the value of exposed data increases.

This turns today’s oversight into tomorrow’s breach.

Why Security Must Be Built Into AI Strategy

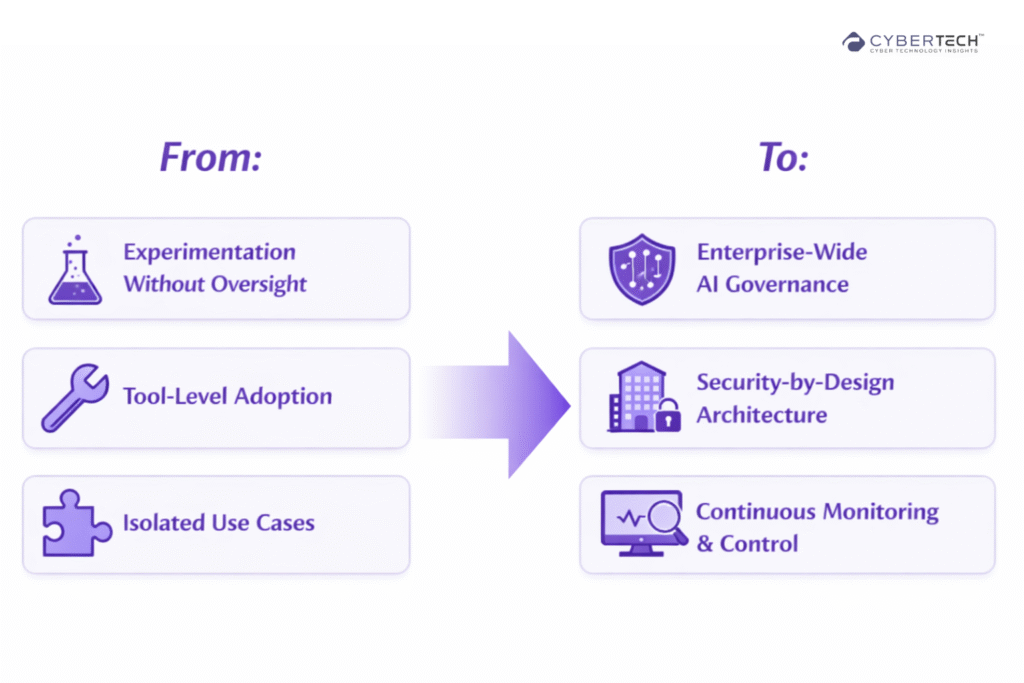

Security cannot be retrofitted into AI. It must be embedded from the start.

This requires a shift in how organizations approach AI, because once AI is deeply integrated into operations, remediation becomes far harder.

What a Secure AI Strategy Looks Like

To move forward, enterprises must focus on five priorities:

1. AI Visibility and Inventory

Understand where AI is being used across the organization.

2. Data Governance

Control what data AI systems can access and process.

3. Access and Identity Controls

Treat AI systems like privileged users with strict permissions.

4. Continuous Monitoring

Track AI behavior, outputs, and anomalies in real time.

5. Risk-Aligned Governance

Align AI initiatives with enterprise risk management frameworks.

The Real Risk Is Not AI, It’s Unsecured AI

AI is not inherently dangerous, but unsecured AI is.

The organizations that will succeed are not the ones that adopt AI the fastest.

They are the ones that:

- Understand its risks.

- Build governance early.

- Treat AI as a strategic system, not a tool.

Final Thought

AI is an active force inside your business, making decisions, moving data, and influencing outcomes in ways that are often invisible to leadership.

That changes the stakes.

This is not about technology adoption anymore. It is about control, accountability, and business resilience.

Every unsecured AI system is not just a technical gap. It is a potential point of failure across operations, compliance, reputation, and revenue.

Unlike traditional systems, AI does not fail quietly. It scales mistakes, amplifies exposure, and accelerates impact.

FAQs

1. What are the biggest cybersecurity risks of AI in enterprises?

AI introduces risks across data exposure, model manipulation, and automated attack scaling. It expands the attack surface while reducing the time attackers need to exploit vulnerabilities.

2. Why is AI governance critical for business leaders?

AI governance ensures visibility, accountability, and control over how AI systems operate. Without it, organizations risk compliance failures, data leaks, and unpredictable decision-making at scale.

3. How are cybercriminals using AI to launch attacks?

Threat actors use AI to automate phishing, generate malware, and identify system weaknesses faster. This makes attacks more precise, scalable, and harder to detect.

4. What is “shadow AI” and why does it matter?

Shadow AI refers to unapproved or unmanaged AI tools used within an organization. It creates hidden data flows and security gaps that bypass existing controls and policies.

5. How can enterprises secure AI adoption effectively?

Enterprises must implement AI inventory tracking, data governance, access controls, and continuous monitoring. Security needs to be embedded into the AI strategy, not added after deployment.

To share your insights, please write to us at news@intentamplify.com
